
CNN with an accuracy of 99%

Digit recognition

We all enjoy doing well at whatever we take on, and this Kaggle competition gave me a taste of that.

This article summarizes what this Digit Recognition competition using CNNs is all about and how to submit your predictions to Kaggle as a CSV file.

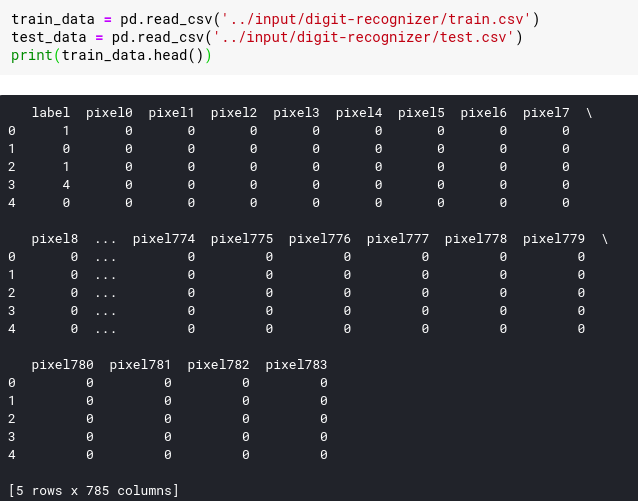

Loading and understanding data

The data files contain gray-scale images of hand-drawn digits, from zero through nine.

As seen in the output, the training data set has 785 columns. The first column, called “label”, is the digit that was drawn by the user. The remaining 784 columns contain the pixel values of the associated image.

Each pixel value is an integer between 0 and 255, inclusive, and indicates how light or dark that pixel is, with higher numbers meaning darker.

The test data set has the same layout as the training set, except that it does not contain the “label” column.
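To make the layout concrete, here is a minimal sketch of splitting such a table into labels and images. Several things in it are assumptions rather than details from this article: the helper name `split_labels_and_pixels` is hypothetical, the pixel columns are assumed to be named `pixel0`, `pixel1`, and so on, and the 784 pixel columns are assumed to form a 28 x 28 image. A small random frame stands in for the real data so the snippet runs without the Kaggle files.

```python
import numpy as np
import pandas as pd

IMAGE_SIDE = 28  # assumption: 784 pixel columns form a 28 x 28 image


def split_labels_and_pixels(frame: pd.DataFrame):
    """Split a frame laid out like the competition data into (labels, images).

    Hypothetical helper: labels is None when there is no "label" column,
    which is the case for the test set.
    """
    if "label" in frame.columns:
        labels = frame["label"].to_numpy()
        pixels = frame.drop(columns="label")
    else:
        labels = None
        pixels = frame
    # One row per image; reshape each row of 784 values into a 2-D grid.
    images = pixels.to_numpy(dtype=np.uint8).reshape(-1, IMAGE_SIDE, IMAGE_SIDE)
    return labels, images


# Synthetic stand-in for the training data (random pixels, random labels).
rng = np.random.default_rng(0)
pixel_names = [f"pixel{i}" for i in range(IMAGE_SIDE * IMAGE_SIDE)]
demo_train = pd.DataFrame(
    rng.integers(0, 256, size=(5, len(pixel_names))), columns=pixel_names
)
demo_train.insert(0, "label", rng.integers(0, 10, size=5))

# The test data has the same columns minus "label".
demo_test = demo_train.drop(columns="label")

train_labels, train_images = split_labels_and_pixels(demo_train)
test_labels, test_images = split_labels_and_pixels(demo_test)

print(demo_train.shape, train_images.shape)  # 785 columns in, 28 x 28 images out
print(demo_test.shape, test_labels)          # 784 columns, no labels
```

With the real files, you would replace the synthetic frame with something like `pd.read_csv("train.csv")`, where the file name is again an assumption about how the downloaded data is stored.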